Recent advancements in AI-driven cybersecurity have led to the development of numerous models that integrate ML and DL for IDS and IPS. However, these models still exhibit limitations in dynamic threat adaptation, FPR, RT, and Computational Overhead (CO). AI-E-DiD addresses these challenges by integrating multiple AI-driven components within a DiD architecture (Table 1).

Below, this study compares AI-E-DiD with three commonly used AI-integrated security models.

A. AI-IDS.

- Existing Approach: AI-IDS commonly employ ML classifiers, such as Decision Trees (DT), Support Vector Machines (SVM), or Deep Neural Networks (DNN), to identify anomalous network traffic. These models have been shown to improve accuracy in detecting CTI; however, they frequently lack real-time adaptability and still generate a high FPR in dynamic environments61.

- AI-E-DiD Advantage: AI-E-DiD strengthens the IDS by employing an LSTM-AE, which captures sequential dependencies in financial transactions and network traffic. This lowers the FPR and enhances the AD of APT, which evolve over time.

B. AI-Driven Signature-IDS.

- Existing Approach: Some AI-enhanced security models incorporate S-IDS combined with AI-powered threat intelligence to classify known attack patterns more efficiently42. However, these models remain limited to detecting pre-defined attack patterns and cannot effectively counter zero-day exploits or emerging attack approaches.

- AI-E-DiD Advantage: AI-E-DiD overcomes this limitation by integrating Generative Adversarial Networks (GAN) into the AI-E-IPS. GAN generates synthetic attack scenarios, improving the system’s ability to detect previously unseen attack patterns while maintaining a low FPR.

C. Hybrid AI-Driven Security Models.

- Existing Approach: Some hybrid AI models combine supervised and unsupervised learning to enhance AD, utilizing methods such as AE62. While these models can learn from unlabelled data, they typically lack real-time mitigation methods, requiring human intervention for threat response.

- AI-E-DiD Advantage: AI-E-DiD extends beyond AD by incorporating AI-driven dynamic security policies within an adaptive IPS. The Generative Adversarial Networks-Long Short-Term Memory-Autoencoders (GAN-LSTM-AE) model not only detects attacks but also triggers automated response mechanisms to neutralize them in real time.

The AI-E-DiD demonstrates superiority over existing AI-driven security models by integrating multiple AI components for enhanced AD, automated response, and security adaptation. Unlike traditional AI-IDS or S-IDS, AI-E-DiD provides a real-time, adaptive security mechanism that ensures a low FPR, effective zero-day attack mitigation, and automated response to evolving threats63.

DiD architecture

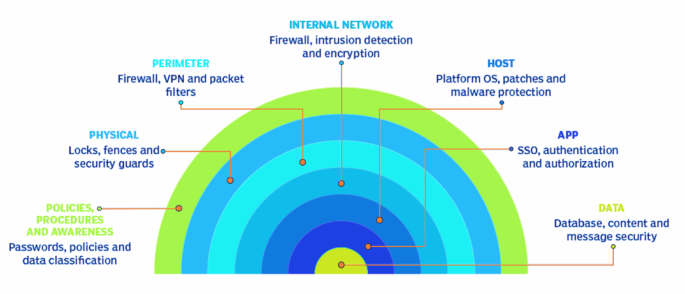

The DiD paradigm is a foundational principle in modern CTI, designed to provide multilayered security across technological, operational, and procedural domains. Rather than relying on a single defensive mechanism, DiD ensures that failure in one layer does not compromise the security of the entire system. This layered method is particularly vital in high-value sectors, such as financial services, where the confidentiality, integrity, and availability of data must be synchronously secured against both external and internal threats.

As illustrated in Fig. 1, the DiD is conceptualized as a series of concentric defensive rings that collectively protect the system’s core, which comprises sensitive data and critical assets. The outermost layer focuses on human-centric controls such as policies, procedures, and awareness training, which aim to reduce social engineering risks and ensure secure behavior by personnel. Moving inward, network and edge protection enforces perimeter security through firewalls, intrusion prevention systems, and secure gateways.

The next layer, identity and access management, governs user authentication, authorization, and role-based access control, mitigating risks associated with credential misuse and unauthorized privilege escalation. The threat detection and incident response layer is responsible for proactively identifying malicious activity and ensuring timely containment and mitigation, typically using IDS, Security Information and Event Management (SIEM), and security orchestration tools.

The infrastructure protection layer encompasses measures to secure physical and virtual system components, including servers, routers, storage systems, and hypervisors. Application protection focuses on hardening the software layer through secure coding practices, vulnerability scanning, and runtime protection mechanisms. Finally, at the core of the DiD lies data protection, which includes CTI, data masking, secure backups, and access auditing to ensure that sensitive financial data remains secure even under compromise conditions.

This standard DiD proposes a robust framework to structure security defenses in financial networks. However, traditional DiD implementations are essentially static, frequently requiring manual configuration and lacking the ability to dynamically adapt to evolving CTI such as zero-day exploits or APT. To address these limitations, the proposed AI-E-DiD integrates intelligent, adaptive components across multiple DiD layers—most notably anomaly detection through GAN-LSTM-AE, secure communication via AES-GCM, and real-time threat mitigation by AI-E-IPS.

Proposed AI-E-DiD

The proposed AI-E-DiD extends the fundamental principles of the classical DiD by incorporating AI-driven mechanisms into multiple security layers, enabling adaptive, autonomous, and context-aware AD and mitigation across federated financial networks. As illustrated in Fig. 2, the traditional concentric DiD layers—comprising policies, network and edge protection, identity and access management, AD and incident response, infrastructure defense, application protection, and data security—serve as the base schema upon which AI-E-DiD operates64.

Within this schema, the AI-E-DiD incorporates a multi-stage intelligent security system that continuously monitors, detects, and responds to threats. At the Threat Detection & Incident Response layer, the system employs a hybrid GAN–LSTM–AE, capable of identifying deviations in network traffic and transaction behavior indicative of CTI, including both known and zero-day attacks. This ML core functions as the autonomous AD, supporting continuous surveillance and adaptive threat inference across diverse network behaviors.

Upon detecting anomalous patterns, threats are classified into one of three predefined categories:

- Category I: Surveillance, involving early-stage scanning and probing activity targeting the network set-up (linked to Network & Edge Protection).

- Category II: Potential Breach, characterized by traffic irregularities and exploitation attempts (mapped to Identity & Access Management and Threat Detection).

- Category III: Compromised IDS, indicating system takeovers or internal compromise requiring immediate containment (mapped to the Infrastructure and Application Protection layers).

Following this classification, the Decision-Making Process (DMP) shown in Fig. 3 is initiated. If an attack is confirmed, the AI-E-DiD informs the Security Operations Center (SOC) and initiates multi-stage isolation protocols. These are executed across the infrastructure protection and application security layers, where compromised nodes or services are disconnected from the broader system and secured using AES-GCM encryption and key renegotiation, preserving integrity and confidentiality.

Specifically, the system distinguishes between minor and major threat levels. In low-impact scenarios, automated alerts trigger system isolation and restoration workflows. For severe threats, the model enforces intelligent process-level quarantine, initiates a new AES key exchange, and secures sensitive service flows under isolation until the threat is neutralized. This corresponds to the Application Protection and Data Protection layers of DiD65.
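The classification and response flow described above can be sketched as a small lookup. This is only an illustrative outline: the names `Category`, `LAYERS`, and `plan_response`, the step labels, and the rule that unconfirmed detections are simply kept under monitoring are assumptions made for the example, not part of the proposed model.

```python
from enum import Enum


class Category(Enum):
    SURVEILLANCE = "Category I"
    POTENTIAL_BREACH = "Category II"
    COMPROMISED = "Category III"


# DiD layers each category is linked to, as listed in the text.
LAYERS = {
    Category.SURVEILLANCE: ["Network & Edge Protection"],
    Category.POTENTIAL_BREACH: ["Identity & Access Management", "Threat Detection"],
    Category.COMPROMISED: ["Infrastructure Protection", "Application Protection"],
}


def plan_response(category, confirmed, severe):
    """Return the DiD layers involved and an ordered list of response steps."""
    layers = LAYERS[category]
    if not confirmed:
        # Assumption: unconfirmed detections are only kept under observation.
        return layers, ["continue monitoring"]
    steps = ["notify SOC", "initiate multi-stage isolation"]
    if severe:
        # Major threat: quarantine, fresh key exchange, isolated service flows.
        steps += [
            "process-level quarantine",
            "renegotiate AES key",
            "isolate sensitive service flows",
        ]
    else:
        # Minor threat: automated alert, then isolation and restoration.
        steps += ["automated alert", "system isolation and restoration"]
    return layers, steps


layers, steps = plan_response(Category.COMPROMISED, confirmed=True, severe=True)
print(layers)
print(steps)
```

Keeping the category-to-layer mapping in a table separate from the severity branch makes it easy to adjust either one without touching the other.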

What distinguishes AI-E-DiD from static DiD is its embedded closed-loop learning mechanism. The GAN–LSTM–AE engine continuously retrains on post-incident logs to improve its predictive capabilities, reducing FPR and enhancing DR. This renders the model inherently robust and self-adaptive, capable of evolving with novel attack vectors and emerging behavioral anomalies, thereby strengthening the overall incident response loop.

The Proposed AI-E-DiD Model.

Security decision mechanism.

AI-Driven AD for CTI

The time-series transaction data is preprocessed and forwarded to the designed LSTM-AE. The LSTM-AE is trained using preprocessed data containing standard transaction patterns66. The AD is conducted at the final stage by computing the model’s reconstruction errors on test samples that contain both standard and anomalous transactions (Fig. 4).

Data preprocessing

The process begins with analyzing the dataset to identify patterns and address missing or incorrect data67. Normalization prevents skewed results from non-normal data distributions. The scikit-learn MinMaxScaler scales the values between 0 and 1, applying the same scaling conditions across training, validation, and testing datasets to maintain consistency.

The normalization EQU (1) is:

$$Z_i=\frac{x_i-\min(x)}{\max(x)-\min(x)}$$

(1)

where \(x_i\) is the raw value of a feature, \(\min(x)\) and \(\max(x)\) are the minimum and maximum of that feature, and \(Z_i\) is the normalized value.

Missing data points are managed by imputation, where the missing entries are replaced with the median of the corresponding feature. Outliers are identified using the z-score EQU (2).

$$z_i=\frac{x_i-\mu}{\sigma}$$

(2)

where,

- \(z_i\): \(z\)-score of a data point \(x_i\).

- \(\mu\): Mean of the feature.

- \(\sigma\): Standard deviation of the feature.

Data points with \(z\)-scores outside a specified range (beyond \(\pm 3\)) are flagged as outliers and removed. Handling Categorical Variables (CV) involves transforming these into a numerical format that the model can process. It is done using one-hot encoding, where CV, such as protocol types, are converted into binary vectors, EQU (3)

$$\text{HTTP}=[1,0,0],\ \text{FTP}=[0,1,0],\ \text{SMTP}=[0,0,1]$$

(3)
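The preprocessing steps in EQU (1) to EQU (3) can be sketched as follows. To keep the example dependency-free, the scaling is written in plain Python as a stand-in for scikit-learn's MinMaxScaler; the function names and the sample values are hypothetical and chosen only for illustration.

```python
from statistics import mean, median, pstdev


def impute_median(values):
    """Replace missing entries (None) with the median of the observed values."""
    observed = [v for v in values if v is not None]
    fill = median(observed)
    return [fill if v is None else v for v in values]


def drop_outliers(values, limit=3.0):
    """Remove points whose z-score, EQU (2), lies beyond +/- limit."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return list(values)
    return [v for v in values if abs((v - mu) / sigma) <= limit]


def fit_min_max(train):
    """Learn Min and Max on the training split only."""
    return min(train), max(train)


def scale_min_max(values, lo, hi):
    """Apply EQU (1) with the training Min/Max so all splits share one scale."""
    span = hi - lo
    return [(v - lo) / span if span else 0.0 for v in values]


def one_hot(value, categories):
    """Encode a categorical value as a binary vector, EQU (3)."""
    return [1 if value == c else 0 for c in categories]


# Hypothetical transaction amounts with one missing entry and one extreme value.
raw = [10.0, 12.0, None, 11.0, 13.0, 9.0, 10.0, 12.0, 11.0, 10.0, 13.0, 9.0, 500.0]
filled = impute_median(raw)
cleaned = drop_outliers(filled)
lo, hi = fit_min_max(cleaned)
scaled = scale_min_max(cleaned, lo, hi)
protocol = one_hot("FTP", ["HTTP", "FTP", "SMTP"])
print(scaled)
print(protocol)
```

Fitting the minimum and maximum once and reusing them reflects the requirement that the same scaling conditions apply to the training, validation, and testing datasets.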

LSTM layer

The LSTM is a Recurrent Neural Network (RNN) variant that incorporates a long-term memory cell to manage data flow and recognize long-term dependencies in time-series financial transactions68. Figure 5 illustrates the model of the vanilla LSTM, featuring a memory cell and three gates: Input Gate (IG), Output Gate (OG), and Forget Gate (FG). These components regulate data flow within the network, deciding whether to retain or discard data based on its relevance50. The LSTM processes each input vector \(X_t\) at time \(t\) by the following steps:

- The FG decides what data to maintain or remove from the cell state, based on the previous hidden state \(H_{(t-1)}\) and the current input \(X_t\); the gate computations are given in EQU (4) to EQU (6).

$$f_t=\sigma\left(w_f\left[H_{(t-1)},X_t\right]+b_f\right)$$

(4)

where,

- \(\sigma\): The sigmoid function.

- \(\{w_f,b_f\}\): The weight and bias of the FG.

- The IG assesses the importance of new data and updates the cell state:

$$i_t=\sigma\left(w_i\left[H_{(t-1)},X_t\right]+b_i\right)$$

(5)

and introduces a new memory component:

$$C'_t=\tanh\left(w_c\left[H_{(t-1)},X_t\right]+b_c\right)$$

(6)

where,

- \(\sigma\), \(\tanh\): The sigmoid and hyperbolic tangent functions.

- \(w_i,b_i,w_c,b_c\): The respective weights and biases.

- The OG computes the new hidden state using the updated cell state, which in the vanilla LSTM is \(C_t=f_t\odot C_{(t-1)}+i_t\odot C'_t\), as shown in EQU (7) to (8).

$$o_t=\sigma\left(w_o\left[H_{(t-1)},X_t\right]+b_o\right)$$

(7)

and

$$H_t=o_t\odot\tanh\left(C_t\right)$$

(8)

where,

- \(\odot\): Element-wise multiplication.

- The process cycles with the newly computed hidden state \(H_t\) and cell state \(C_t\) serving as inputs for the next unit.
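A single LSTM time step following EQU (4) to EQU (8) might look like the sketch below. It uses plain Python lists rather than a DL framework, the cell state is updated with the standard vanilla-LSTM rule, and the parameter dictionary layout, dimensions, and weight values are hypothetical.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def affine(w, b, vec):
    """Compute w @ vec + b for a weight matrix given as a list of rows."""
    return [sum(wi * vi for wi, vi in zip(row, vec)) + bi for row, bi in zip(w, b)]


def lstm_step(x_t, h_prev, c_prev, p):
    """One LSTM step; returns the new hidden state H_t and cell state C_t."""
    concat = h_prev + x_t  # [H_(t-1), X_t]
    f_t = [sigmoid(v) for v in affine(p["w_f"], p["b_f"], concat)]      # EQU (4)
    i_t = [sigmoid(v) for v in affine(p["w_i"], p["b_i"], concat)]      # EQU (5)
    c_new = [math.tanh(v) for v in affine(p["w_c"], p["b_c"], concat)]  # EQU (6)
    # Standard cell-state update: keep part of the old state, add new memory.
    c_t = [f * c_old + i * g for f, c_old, i, g in zip(f_t, c_prev, i_t, c_new)]
    o_t = [sigmoid(v) for v in affine(p["w_o"], p["b_o"], concat)]      # EQU (7)
    h_t = [o * math.tanh(c) for o, c in zip(o_t, c_t)]                  # EQU (8)
    return h_t, c_t


# Hypothetical parameters: hidden size 2, input size 1 (so 3 columns per row).
params = {
    "w_f": [[0.1, 0.0, 0.2], [0.0, 0.1, -0.2]], "b_f": [0.0, 0.0],
    "w_i": [[0.3, 0.1, 0.0], [0.1, 0.3, 0.0]], "b_i": [0.0, 0.0],
    "w_c": [[0.2, -0.1, 0.5], [-0.1, 0.2, 0.5]], "b_c": [0.0, 0.0],
    "w_o": [[0.1, 0.1, 0.1], [0.1, 0.1, 0.1]], "b_o": [0.0, 0.0],
}

h, c = [0.0, 0.0], [0.0, 0.0]
for x in ([0.2], [0.4], [0.9]):  # a short, made-up transaction sequence
    h, c = lstm_step(x, h, c, params)
print(h, c)
```

The loop shows the cycling described in the last step: each call feeds the hidden and cell states it produced back in as inputs for the next time step.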

Autoencoder

The model of the AE, presented in Fig. 6, comprises two components: the encoder, which compresses the input, and the decoder, which reconstructs the input from its compressed form69.

- Encoder: Takes the input data \(x\) and transforms it into a smaller, dense representation \(z\), EQU (9).

$$z=f\left(x\right)$$

(9)

where,

- \(f\): Deterministic mapping \(\mathbb{R}^n\to\mathbb{R}^p\) (with \(p<n\)).

- Decoder: Generates a reconstruction \(x'\) of the original input \(x\) from the latent code \(z\), EQU (10).

$$x'=g\left(z\right)$$

(10)

where \(g\) is the corresponding deterministic mapping \(\mathbb{R}^p\to\mathbb{R}^n\).

To evaluate the accuracy of the AE, the reconstruction loss is computed using the Root Mean Squared Error (RMSE), EQU (11).

$$L(x,x')=\sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(x'_i-x_i\right)^2}$$

(11)

where,

- \(n\): The number of samples in the training set.

- \(\{x,x'\}\): The original and reconstructed data.

In this work, the AE performs dimensionality reduction and feature extraction on the transaction data, and the result is fed into an LSTM. After training the model on the transaction dataset, the AD function scores the reconstructed test dataset using the RMSE to identify anomalies.
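Reconstruction-error scoring with EQU (11) can be illustrated with a toy example. The encoder here simply keeps the mean of the input (a mapping from \(\mathbb{R}^n\) to \(\mathbb{R}^1\)) and stands in for the trained AE; the threshold rule (mean plus three standard deviations of the training errors) and all sample values are assumptions, since the text does not state how the cut-off is chosen.

```python
import math
from statistics import mean, pstdev


def encode(x):
    """Toy encoder f: keeps only the mean of the input (p = 1 < n)."""
    return [sum(x) / len(x)]


def decode(z, n):
    """Toy decoder g: repeats the latent value n times."""
    return [z[0]] * n


def reconstruct(x):
    return decode(encode(x), len(x))


def rmse(x, x_rec):
    """EQU (11), computed over the compared values."""
    return math.sqrt(sum((r - o) ** 2 for o, r in zip(x, x_rec)) / len(x))


def fit_threshold(train_errors, k=3.0):
    """Hypothetical cut-off: mean + k * std of errors on normal data."""
    return mean(train_errors) + k * pstdev(train_errors)


# Made-up, already normalized windows of normal transactions.
normal_train = [
    [0.50, 0.52, 0.49, 0.51],
    [0.48, 0.50, 0.51, 0.49],
    [0.50, 0.50, 0.50, 0.54],
]
threshold = fit_threshold([rmse(x, reconstruct(x)) for x in normal_train])

test_samples = [
    [0.49, 0.50, 0.51, 0.50],  # resembles the training data
    [0.50, 0.50, 0.95, 0.50],  # contains a spike
]
flags = [rmse(x, reconstruct(x)) > threshold for x in test_samples]
print(flags)
```

Because the stand-in model only reproduces patterns it has seen, the spiked window reconstructs poorly and its RMSE exceeds the threshold, which is the same principle the trained AE relies on.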

AES for secure computing

The selection of AES-GCM over alternative encryption modes such as Cipher Block Chaining (CBC) and XEX-based Tweaked CodeBook Mode with CipherText Stealing (XTS) was made based on three key factors: performance, efficiency, and security relevance, particularly for financial services where data confidentiality, integrity, and real-time transaction security are paramount.

A. Performance and CO.

- AES-GCM offers parallelizable encryption and decryption, unlike AES-CBC, whose encryption is inherently sequential and cannot be parallelized due to its chaining mechanism. This feature reduces processing latency in financial transactions where real-time encryption is key.

- Lower CO: AES-GCM performs encryption and authentication simultaneously, unlike AES-XTS, which focuses primarily on disk encryption and requires additional authentication mechanisms for data integrity verification.

- AES-GCM leverages hardware acceleration in modern processors (e.g., Intel’s AES-NI), enhancing performance in large-scale financial transactions.

B. Integrated Authentication and Data Integrity.

- AES-GCM includes GMAC, which provides built-in integrity verification, reducing the need for additional cryptographic functions. This is a significant advantage over AES-CBC, which requires a separate Message Authentication Code (MAC) to ensure integrity, leading to increased computational costs and potential security risks if not implemented properly.

- Data authenticity and non-repudiation in financial networks are critical to prevent transaction tampering. AES-GCM’s authentication ensures that any alteration in encrypted transactions is immediately detected, reducing fraud risks.

C. Security Advantages in Financial Services.

- Resistance To Padding Oracle Attacks: AES-CBC is vulnerable to padding oracle attacks, which exploit improper handling of ciphertext padding, making it a less secure choice for online transactions. AES-GCM is immune to such attacks, operating in a stream-like counter mode without requiring padding.

- Resilience Against Replay Attacks: Financial transactions frequently require strong protection against replay attacks, where encrypted data is intercepted and resent maliciously. AES-GCM employs a unique nonce for each encryption, which, combined with nonce or sequence tracking at the receiver, allows previously used ciphertexts to be rejected.

- Scalability In Cloud-Based Financial Platforms: AES-GCM supports efficient encryption in cloud computing environments, making it ideal for securing large-scale, multi-user financial networks where low-latency encryption and secure transmission of sensitive data are essential.

AES-GCM was selected over other encryption modes, such as AES-CBC and AES-XTS, due to its parallelizable encryption and decryption, built-in authentication mechanism, and resistance to padding oracle and replay attacks. Unlike AES-CBC, which requires a separate integrity verification mechanism, AES-GCM provides authenticated encryption in a single pass, reducing CO. Moreover, AES-GCM ensures low-latency encryption, making it highly suitable for real-time financial transactions and cloud-based financial services.

AES-GCM operates by integrating encryption and authentication in a structured process. Figures 5 and 6 present the encryption and decryption of AES-GCM.

Encryption process of AES-GCM.

The operational mechanics of AES-GCM are presented below:

1. Key Schedule: AES-GCM uses a symmetric key of 128, 192, or 256 bits, and the same key is used for both encryption and decryption. The key schedule in AES generates a series of round keys from the initial key. These round keys are used in each round of the AES encryption and decryption process.

2. Counter Mode for Encryption: AES-GCM utilizes counter mode (CTR) for encryption, converting the block cipher into a stream cipher. It encrypts input blocks of data by combining them with an encrypted counter. The counter, incremented for each block, is encrypted with the AES key, and the output is XORed with plaintext (PT) to produce ciphertext (CT).

The encryption of a block is represented as EQU (12)

$$C_i=P_i\oplus\text{AES}_K\left(T_i\right)$$

(12)

where,

- \(P_i\): PT block.

- \(C_i\): CT block.

- \(T_i\): Counter for block \(i\).

- \(K\): AES key.

3. Authentication Tags via Galois Field Multiplication: The authentication feature of GCM is handled by GMAC, which uses Galois field multiplication to compute an authentication tag.

The tag is computed as follows: EQU (13)

$$T=\text{AES}_K(S\oplus H)$$

(13)

where,

- \(S\): The output of the Galois field multiplication of the encrypted data and any additional authenticated data.

- \(H\): A hash subkey derived from the AES key \(K\).

- \(T\): The authentication tag.
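The counter-mode step in EQU (12) can be sketched as below. To stay within the Python standard library, the block function is a SHA-256-based stand-in and is explicitly not AES, so this only illustrates the CTR structure and must not be used to protect real data; `block_stand_in`, `ctr_xor`, and the key and nonce values are hypothetical.

```python
import hashlib

BLOCK = 16  # block size in bytes, as in AES


def block_stand_in(key, counter_block):
    """Stand-in for AES_K(T_i): a SHA-256-based function, NOT real AES."""
    return hashlib.sha256(key + counter_block).digest()[:BLOCK]


def ctr_xor(key, nonce, data):
    """C_i = P_i XOR E_K(T_i); the same call decrypts, since XOR undoes itself."""
    out = bytearray()
    for offset in range(0, len(data), BLOCK):
        # GCM reserves the first counter block (Y_0) for the tag mask,
        # so data blocks start at counter value 2.
        counter = offset // BLOCK + 2
        counter_block = nonce + counter.to_bytes(4, "big")  # T_i
        keystream = block_stand_in(key, counter_block)
        chunk = data[offset:offset + BLOCK]
        out.extend(p ^ k for p, k in zip(chunk, keystream))
    return bytes(out)


key = bytes(16)            # demo key only
nonce = bytes(range(12))   # 96-bit nonce; must never repeat under one key
message = b"transfer 250.00 from account A to account B"

ciphertext = ctr_xor(key, nonce, message)
recovered = ctr_xor(key, nonce, ciphertext)
print(recovered == message)
```

Each keystream block depends only on the key, the nonce, and the counter, which is why the blocks can be computed independently and in parallel, and why no padding is needed for a final partial block.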

Decryption process of AES-GCM.

In the proposed AI-E-DiD, during a transaction, the AES-GCM encryption process secures the data by encrypting it using AES in CTR mode. Each block of PT data is XORed with an encrypted counter block. To verify the integrity and authenticity of the transaction data, an authentication tag is generated by Galois field multiplication and appended to the CT, EQU (14)

$$\text{Tag}=\text{GHASH}(H,\{C\})\oplus\text{AES}_K\left(Y_0\right)$$

(14)

where \(H\) is the hash subkey, \(\{C\}\) denotes the CT blocks, and \(Y_0\) is the initial counter block.

After AD (Fig. 7), AES-GCM ensures that any data processed under these conditions remains untampered. Verifying the authentication tag during decryption ensures that any unauthorized changes to encrypted data are detected immediately. If the tag does not match the expected result, it indicates probable tampering, triggering further security measures.
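The tag computation in EQU (14) and its verification can be sketched with 128-bit integers. The field multiplication follows the bitwise algorithm of the GCM specification; the values of `h` and `mask` (playing the roles of \(H\) and \(\text{AES}_K(Y_0)\)) and the CT blocks are made up, and the caller is assumed to supply blocks that are already padded and followed by the length block.

```python
import hmac

R = 0xE1 << 120  # reduction constant for GF(2^128) in GCM bit order


def gf_mult(x, y):
    """Multiply two 128-bit field elements (leftmost bit is the lowest power)."""
    z, v = 0, y
    for i in range(128):
        if (x >> (127 - i)) & 1:
            z ^= v
        v = (v >> 1) ^ R if v & 1 else v >> 1
    return z


def ghash(h, blocks):
    """Fold the blocks into one value: Y = (Y XOR block) * H for each block."""
    y = 0
    for block in blocks:
        y = gf_mult(y ^ block, h)
    return y


def compute_tag(h, blocks, mask):
    """Tag = GHASH(H, {C}) XOR AES_K(Y_0); `mask` stands for AES_K(Y_0)."""
    return ghash(h, blocks) ^ mask


def verify_tag(h, blocks, mask, received_tag):
    """Recompute the tag and compare in constant time."""
    expected = compute_tag(h, blocks, mask).to_bytes(16, "big")
    return hmac.compare_digest(expected, received_tag.to_bytes(16, "big"))


# Hypothetical values for illustration only.
h = 0x66E94BD4EF8A2C3B884CFA59CA342B2E
mask = 0x58E2FCCEFA7E3061367F1D57A4E7455A
blocks = [0x0388DACE60B6A392F328C2B971B2FE78, 0x80]

tag = compute_tag(h, blocks, mask)
tampered = [blocks[0] ^ 1, blocks[1]]
print(verify_tag(h, blocks, mask, tag))
print(verify_tag(h, tampered, mask, tag))
```

Flipping a single bit of a CT block changes the GHASH output, so the recomputed tag no longer matches and the receiver can reject the data before using it.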

AI-Enhanced IPS

The AI-E-IPS process begins with data preprocessing, normalization, and cleaning of network traffic data to remove noise and other unwanted elements. This data is then input into a GAN to generate synthetic threat data, which the LSTM-AE then analyzes to identify cyber-attacks.

The GAN consists of two main components: the Generator and the Discriminator.

The LSTM-AE serves as the Discriminator in this model, while the Generator generates synthetic data that resembles secure network traffic.

- Generator: The Generator \(G(z;\theta_g)\) generates synthetic network traffic from a noise vector \(z\) sampled from a probability distribution \(p_z(z)\), EQU (15).

$$\mathcal{L}_G=\mathbb{E}_{z\sim p_z(z)}\left[\log\left(1-D\left(G(z)\right)\right)\right]$$

(15)

where,

- \(D(G(z))\): The output of the LSTM-AE.

- Discriminator (LSTM-AE): As the Discriminator, the LSTM-AE evaluates the authenticity of the data by checking whether it is real or generated by the GAN, EQU (16).

$$\mathcal{L}_D=-\mathbb{E}_{x\sim p_{\text{data}}(x)}\left[\log D(x)\right]-\mathbb{E}_{z\sim p_z(z)}\left[\log\left(1-D\left(G(z)\right)\right)\right]$$

(16)

where \(D(x)\) is the Discriminator output for a real sample \(x\) drawn from the data distribution \(p_{\text{data}}(x)\).

The LSTM-AE regenerates input sequences and flags anomalies based on reconstruction errors. Over time, the GAN refines its data generation method using feedback from the LSTM-AE, thereby enhancing its ability to detect and avoid known and unknown attacks.
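The two losses in EQU (15) and EQU (16) can be evaluated on a batch of Discriminator outputs as sketched below. How the LSTM-AE turns a reconstruction error into a score in (0, 1) is not specified in the text, so `score_from_error` (an exponential mapping) is purely an assumption, as are the sample error values.

```python
import math

EPS = 1e-12  # keeps log() away from 0


def clip(p):
    return min(max(p, EPS), 1.0 - EPS)


def score_from_error(error, scale=1.0):
    """Hypothetical mapping: low reconstruction error -> score near 1 (real)."""
    return math.exp(-error / scale)


def generator_loss(d_fake):
    """EQU (15): mean of log(1 - D(G(z))) over generated samples."""
    return sum(math.log(1.0 - clip(d)) for d in d_fake) / len(d_fake)


def discriminator_loss(d_real, d_fake):
    """EQU (16): -E[log D(x)] - E[log(1 - D(G(z)))]."""
    real_term = sum(math.log(clip(d)) for d in d_real) / len(d_real)
    return -real_term - generator_loss(d_fake)


# Made-up reconstruction errors for real and generated traffic windows.
real_errors = [0.05, 0.10]
fake_errors = [1.50, 2.00]
d_real = [score_from_error(e) for e in real_errors]
d_fake = [score_from_error(e) for e in fake_errors]

print(generator_loss(d_fake))
print(discriminator_loss(d_real, d_fake))
```

The Generator lowers its loss by producing samples that the Discriminator scores closer to 1, while the Discriminator lowers its loss by scoring real samples high and generated samples low, which is the feedback loop described above.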

AD approach in AI-E-DiD

The AI-driven AD system in AI-E-DiD utilizes a hybrid LSTM-AE to identify known and unknown CTIs in real time. This model was selected due to its ability to learn temporal dependencies in time-series data, which is crucial for detecting deviations in network traffic patterns and financial transactions.

A. Use of LSTM-AE for AD.

- LSTM is a type of RNN designed to capture long-range dependencies in sequential data. In cybersecurity, the model can recognize complex attack patterns and distinguish between normal traffic and anomalies over time, thereby enhancing security.

- AEs are DL models used for unsupervised learning, designed to reconstruct input data and identify deviations. In our model, the AE learns normal transaction patterns, and anomalies are flagged based on reconstruction errors when processing unseen attack data.

B. Preprocessing for Effective AD.

- Time-series transaction data is pre-processed before being fed into the LSTM-AE.

- The MinMaxScaler() is applied to normalize the values between 0 and 1, ensuring that all features contribute proportionally to AD.

- Imputation and outlier handling methods (e.g., median replacement for missing values, Z-score filtering for outliers) enhance data integrity before training.

C. Training and Operation of LSTM-AE in AI-E-DiD.

- The encoder component of the AE compresses input transaction data into a low-dimensional representation.

- The decoder reconstructs the input, and the reconstruction error is computed using the RMSE to assess anomalies.

- Transactions or network activities with high reconstruction errors are classified as anomalies, indicating potential CTI.

- The model is trained using normal transaction data, ensuring that deviations from expected patterns are detected effectively.

D. Use of GAN for Synthetic Threat Generation.

- This study integrates a GAN-based approach for generating synthetic attack data to enhance the robustness of the AD system.

- The GAN consists of a Generator and a Discriminator, where the LSTM-AE functions as the Discriminator, distinguishing real network traffic from GAN-generated synthetic attack data.

- Over time, the GAN improves its ability to generate realistic CTI, enhancing the LSTM-AE’s capability to detect sophisticated and evolving attacks.

E. AI-E-IPS for Adaptive Security.

- Once an anomaly is detected, the AI-E-IPS dynamically adjusts security protocols to mitigate attacks.

- The IPS isolates affected network segments and prevents unauthorized access by leveraging real-time AD feedback from the LSTM-AE.

Purpose of LSTM-AE instead of traditional ML

Unlike conventional AD methods, such as Random Forest (RF), SVM, or k-Means clustering, the proposed model with LSTM-AE provides several advantages:

- Captures Sequential Dependencies – Unlike tree-based models (e.g., RF), LSTM-AE can analyze transaction sequences over time, making it more effective for detecting time-dependent CTI.

- Unsupervised Learning – Many traditional ML models require labelled data, whereas AEs work unsupervised, making them suitable for detecting novel, previously unseen attacks.

- Adaptive Threat Learning – By integrating a GAN-based threat generator, our model can learn from evolving attack methods, unlike static ML, which relies on predefined rules.
